Facial expression recognition in patients with type 2 diabetes mellitus

Introduction

The incidence of type 2 diabetes mellitus (T2DM) has gradually increased, and T2DM has become a worldwide public health problem that seriously threatens human health. T2DM is a metabolic disease that can lead to structural and functional changes in multiple tissues and organs. It can cause central nervous system dysfunction, especially cognitive dysfunction in domains such as attention, executive function and memory (1,2), which has attracted wide attention. The risk of dementia in people with T2DM is 60% higher than in people without diabetes (3). Diabetic cognitive impairment is an intermediate stage between normal cognition and Alzheimer’s disease (AD), and possibly a transitional state toward AD. Diabetes accelerates brain aging and increases the risk of dementia (4).

Emotion is an important part of psychological processes and an important factor affecting individual behavior. Facial expression is the outward display of emotion and an important means of communication. Facial expression recognition is an important social cognitive skill that supports social harmony. Recognizing facial expressions involves not only identifying the emotional information expressed on the face, but also classification, working memory, attention and other processes. Therefore, impairment of these cognitive processes in T2DM may affect facial expression recognition.

Studies have shown that people with cognitive impairment have more difficulty identifying angry, happy and fearful expressions than people in the same age group (5). Patients with mild to moderate AD have deficits in identifying sad, surprised and disgusted expressions (6). Shimokawa et al. suggested that there is a close relationship between intellectual ability and the capacity to recognize emotional situations, because without sufficient intellectual ability it is difficult to comprehend the nature of a given situation or the appropriate emotional state that one would experience in that situation (7). T2DM affects cognitive function, but whether facial expression recognition deficits and attention bias exist in T2DM is unknown, and no relevant reports are available. Visual search is a complex cognitive process and an important attentional process that precedes information processing. Attention plays a very important role in visual search and can be regarded as its internal driver, since it controls gaze shifts during the search. The facial expression search task, which evolved from the visual search task, is a commonly used paradigm for measuring emotional processing. Subjects are presented with a series of matrices of expression images and must search for the target expression among the distracter expressions, which is a top-down, conscious cognitive processing task.

In this study, we used a facial expression search task in which subjects searched for emotional targets among neutral distracters, and we studied the characteristics of facial expression discrimination in middle-aged people with T2DM and healthy middle-aged people. Given that accurate detection of the emotional states of others is essential to appropriate interactions, it is important to understand the facial expression recognition characteristics of patients with T2DM. We hypothesized that people with T2DM show varying degrees of impairment in facial expression recognition, and that the impairment is associated with deficits in their cognitive functioning (such as attention) (1,2).

Methods

Subjects and groups

This study was approved by the Ethics Committee of Shandong Provincial Hospital Affiliated to Shandong University (No. 2018-215), and informed consent was obtained from all subjects. We selected 30 middle-aged patients with simple T2DM and poorly controlled blood glucose (HbA1c >6.5%), aged 40–60 years, with an average age of (48.56±6.31) years; 15 were male and 15 were female, and all were right-handed. They had at least 6 years of education, a Mini Mental State Examination (MMSE) score >23, a Hamilton Depression Scale (HAMD) score <7 and a Hamilton Anxiety Scale (HAMA) score <6. Diabetic patients met the 1999 WHO diagnostic criteria for diabetes (8). Thirty healthy middle-aged volunteers, matched by sex, age, length of education, body mass index (BMI), MMSE, HAMD and HAMA, were selected as controls. All controls were right-handed and aged 40–60 years, with an average age of (47.52±6.72) years; 14 were male and 16 were female.

Exclusion criteria: (I) neurological diseases (transient ischemic attack, stroke, Parkinson’s disease, epilepsy, etc.); (II) mental disorders (mania, depression, anxiety, dementia, etc.); (III) history of alcohol dependence or drug abuse; (IV) use of drugs affecting cognitive function (benzodiazepines, etc.); (V) thyroid disorders; (VI) liver or kidney insufficiency; (VII) visual impairment.

Biochemical indicators and glycosylated hemoglobin (HbA1c)

After an 8-hour fast, 4 mL of venous blood was drawn from each subject in the morning and placed in an EDTA tube. The samples were promptly sent to the laboratory for measurement of total cholesterol, triglyceride and low density lipoprotein. Two mL of blood was taken from the EDTA tube for HbA1c measurement by high performance liquid chromatography.

Experimental procedure

The facial expression search task was programmed in E-Prime 2.0 experimental software and run on an IBM-compatible laptop with Windows XP and a 14-inch screen. Emotional pictures were classified as happy, neutral or sad according to the dimensional approach, that is, according to emotional valence (Figure 1). Stimuli were presented at a screen resolution of 1,024×768 pixels.

Subjects sat in front of the computer in a quiet room, and each subject’s vision was normal or corrected to normal. The subject’s eyes were 50 cm from the screen. Each trial began with a fixation point (“+”) presented at the center of the screen for 500 milliseconds, on which the subject fixated. A matrix of cartoon face pictures was then presented, with neutral faces serving as distracters. The stimulus display was based on an imaginary 4×4 matrix, defining 16 possible positions for the target. Each display was either a target display or a no-target display. A target, when present, was a positive (happy) or negative (sad) expression. No-target displays contained 1, 2, 4, 8 or 16 neutral faces; target displays contained the target plus 0, 1, 3, 7 or 15 neutral faces. Each display therefore contained at least 1 and at most 16 cartoon faces. In each trial, the target (positive or negative expression) and the set size (1, 2, 4, 8 or 16) were randomly selected, and each condition was limited to 32 trials in 170 experimental trials. The locations of the target and the distracters were also randomly selected in each trial. Subjects judged whether all faces in the matrix were the same or whether a different expression (positive or negative) was present. If a different expression was present, they pressed the “/” key; if not, they pressed the “Z” key. Subjects were asked to respond as quickly as possible while remaining accurate; the pictures disappeared immediately after the key press, and the next trial began. The experiment was divided into 3 units. The first unit was practice, in which subjects familiarized themselves with the keys and faces; it consisted of 10 trials, took about 1 minute, and the computer gave feedback on accuracy. The second and third units were the formal experiment. Each unit had 80 trials and took about 10 minutes. Response time (RT) was automatically recorded by the computer during the experiment.
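The display logic described above can be illustrated with a minimal Python sketch. It is not the E-Prime program used in the study. The function names `make_display` and `expected_key` are hypothetical, and the sketch assumes that the “/” key corresponds to any display containing a happy or sad target.

```python
import random

SET_SIZES = (1, 2, 4, 8, 16)  # number of faces per display, as described in the text
GRID_CELLS = 16               # positions in the imaginary 4x4 matrix


def make_display(set_size, target, rng):
    """Return a dict mapping a grid cell (0-15) to a face label.

    target is "happy", "sad", or None for a no-target display.
    """
    if set_size not in SET_SIZES:
        raise ValueError("unsupported set size")
    # rng.sample draws distinct cells in random order, so positions are random.
    cells = rng.sample(range(GRID_CELLS), set_size)
    display = {cell: "neutral" for cell in cells}
    if target is not None:
        # One neutral face is replaced by the target, giving set_size - 1 distracters.
        display[cells[0]] = target
    return display


def expected_key(display):
    """Assumed response mapping: '/' if a target expression is present, else 'Z'."""
    has_target = any(face != "neutral" for face in display.values())
    return "/" if has_target else "Z"
```

For example, `make_display(8, "sad", random.Random(0))` yields one sad face and seven neutral faces in eight distinct cells.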

RTs calculation

The average accuracy was calculated as the number of correct responses/160×100%, and subjects with an average accuracy below 95% were excluded. In Microsoft Excel, the highest and lowest response times (RTs) in each set size condition were set aside, and the mean and standard deviation (SD) of the resulting distribution were calculated. If an extreme RT deviated from this mean by more than 2 SDs, it was considered an outlier and deleted. This process was repeated until no outliers remained.
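The accuracy criterion and the iterative outlier rule can be sketched in Python as follows. This is one reading of the procedure, not the Excel workflow actually used. The function names are hypothetical, and the sketch assumes that “2 SDs” means 2 sample SDs from the mean of the RTs remaining after the two extremes are set aside.

```python
from statistics import mean, stdev


def accuracy_percent(n_correct, n_trials=160):
    """Accuracy as a percentage of the 160 formal trials."""
    return n_correct * 100 / n_trials


def trim_rts(rts, n_sd=2.0):
    """Iteratively delete extreme RTs for one set size condition.

    On each pass, the lowest and highest RTs are set aside, the mean and SD
    of the remaining values are computed, and an extreme is deleted if it
    lies more than n_sd SDs from that mean. Stops when neither extreme is
    an outlier, or when too few values remain to estimate an SD.
    """
    kept = sorted(rts)
    while len(kept) >= 4:
        inner = kept[1:-1]
        m, sd = mean(inner), stdev(inner)
        drop_high = kept[-1] > m + n_sd * sd
        drop_low = kept[0] < m - n_sd * sd
        if not (drop_high or drop_low):
            break
        if drop_high:
            kept = kept[:-1]
        if drop_low:
            kept = kept[1:]
    return kept
```

A subject would be retained when `accuracy_percent(n_correct) >= 95`, and `trim_rts` would be applied separately to the RTs of each set size condition.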

Statistical analysis

Data were processed with SPSS 20 software. Measurement data are expressed as mean ± SD. For the general data, sex was compared between the diabetic group and the control group by chi-square test, and differences in age, education level, BMI, blood pressure, triglyceride, cholesterol, low density lipoprotein, MMSE, HAMD and HAMA scores were tested by independent-samples t-test. Facial expression search task data were analyzed by three-factor analysis of variance (group × expression × face number). P<0.05 was considered statistically significant.
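As a worked illustration of the chi-square comparison, the following Python sketch computes a Pearson chi-square statistic for a 2×2 table. It is not the SPSS procedure used in the study. The function name is hypothetical, and the sketch assumes that no continuity correction is applied.

```python
import math


def pearson_chi_square_2x2(table):
    """Pearson chi-square (no continuity correction) for a 2x2 count table.

    Returns the statistic and its P value for 1 degree of freedom.
    """
    (a, b), (c, d) = table
    n = a + b + c + d
    row_totals = (a + b, c + d)
    col_totals = (a + c, b + d)
    chi2 = 0.0
    for i, row in enumerate(table):
        for j, observed in enumerate(row):
            expected = row_totals[i] * col_totals[j] / n
            chi2 += (observed - expected) ** 2 / expected
    # For 1 degree of freedom, P(X > chi2) = erfc(sqrt(chi2 / 2)).
    p_value = math.erfc(math.sqrt(chi2 / 2))
    return chi2, p_value


# Sex distribution reported in "Subjects and groups":
# rows = (diabetic, control), columns = (male, female).
chi2, p_value = pearson_chi_square_2x2([[15, 15], [14, 16]])
```

With these counts the statistic is close to 0.07 and the P value is well above 0.05, in line with the reported absence of a sex difference between groups.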

Results

General information

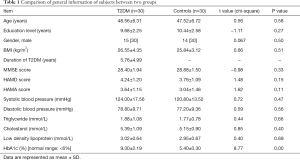

There were no significant differences in age, sex, education level, BMI, blood pressure, triglyceride, cholesterol, low density lipoprotein, MMSE, HAMD or HAMA scores between the diabetic group and the control group (Table 1).


Facial expression search task

The main effects and interactions of group, expression and face number were analyzed by three-way analysis of variance. The main effects of group (F=132.98, P=0.00), face number (F=130.40, P=0.00) and expression (F=165.14, P=0.00) were statistically significant, as were the group × face number interaction (F=6.83, P=0.00) and the face number × expression interaction (F=15.65, P=0.00). The group × expression interaction (F=0.92, P=0.34) and the group × face number × expression interaction (F=0.18, P=0.95) were not statistically significant.

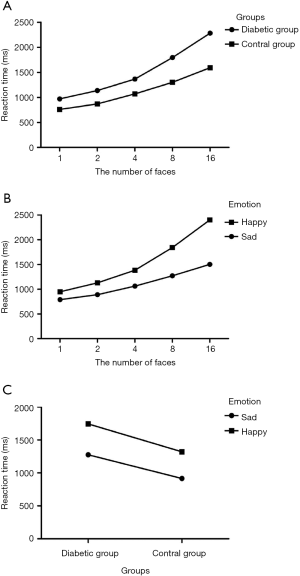

The reaction time of the diabetic group was longer than that of the control group (F=132.98, P=0.00). As the number of faces increased, reaction time was prolonged in both the diabetic group and the control group, and the group × face number interaction was significant (F=6.83, P=0.00) (Figure 2A). The response time for recognizing positive expressions (happy) was longer than that for negative expressions (sad) (F=165.14, P=0.00). As the number of faces increased, reaction time was prolonged for both positive (happy) and negative (sad) expressions, and the face number × expression interaction was significant (F=15.65, P=0.00) (Figure 2B). In the group × expression profile plot, the two lines were nearly parallel, indicating no interaction between group and expression. In both the diabetic group and the control group, the reaction time for recognizing positive expressions (happy) was longer than that for negative expressions (sad) (F=165.14, P=0.00), showing a negative attention bias. The reaction times of the diabetic group for both positive (happy) and negative (sad) expressions were significantly longer than those of the control group (F=132.98, P=0.00) (Figure 2C).

In the diabetic group, the search slope was 419.14 ms for happy expressions (Figure 3A) and 237.97 ms for sad expressions (Figure 3B). In the control group, the search slope was 300.4 ms for happy expressions (Figure 3C) and 119.07 ms for sad expressions (Figure 3D). In both groups, the search for happy and sad expressions was serial.
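A search slope of this kind is conventionally obtained by regressing mean RT on set size. The Python sketch below shows an ordinary least-squares version. It is not the authors' computation: the function name is hypothetical, and the RT values are made-up illustrative numbers, not data from this study.

```python
def search_slope(set_sizes, mean_rts):
    """Least-squares slope and intercept of mean RT regressed on set size."""
    n = len(set_sizes)
    mean_x = sum(set_sizes) / n
    mean_y = sum(mean_rts) / n
    sxx = sum((x - mean_x) ** 2 for x in set_sizes)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(set_sizes, mean_rts))
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    return slope, intercept


# Hypothetical mean RTs (ms) at the five set sizes used in the task.
set_sizes = [1, 2, 4, 8, 16]
illustrative_rts = [650.0, 700.0, 800.0, 1000.0, 1400.0]
slope, intercept = search_slope(set_sizes, illustrative_rts)
```

A slope close to zero would suggest that the target pops out regardless of the number of distracters, whereas a clearly positive slope, as reported for both groups, is consistent with serial search.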

Discussion

The results of the facial expression search task showed that, in both the diabetic group and the control group, the recognition time for happy expressions was significantly longer than that for sad expressions, and both groups showed a negative attention bias. This is consistent with the finding of Hansen and Hansen (9) that angry expressions are detected more readily than happy ones in search tasks. In both groups, the search for happy and sad expressions was serial. Therefore, the results of this experiment do not support Hansen’s conclusion that the search for angry expressions is automatic whereas the search for happy expressions is serial. First, one explanation for Hansen’s findings is that the slope difference between happy and angry targets may reflect slower search through angry distracters than through happy distracters. Searching through angry expressions may be more difficult, whereas this experiment used neutral expressions as distracters. Second, the facial expressions used in the original Hansen study contained various irrelevant visual cues, such as black spots on the chin of the angry face. Purcell et al. used grayscale versions of the photographs used by Hansen to remove these contrast artifacts and did not reach the same conclusion (10).

Attention is defined as a cognitive process of selectively concentrating on one aspect of the environment while ignoring other aspects; it is part of the information processing that underlies cognitive activity. The maintenance, allocation and shifting of attention are crucial to the speed and efficiency with which the brain turns external stimuli into useful information.

Emotions affect attention in many ways. From the point of view of biological evolution, in order to survive in their environment, organisms often give priority to negative information, especially threatening stimuli, so that it enters attention and consciousness; this is attention bias. Attention bias refers to the high sensitivity of individuals to specific stimuli, accompanied by selective attention to them. Enhanced perceptual processing through selective attention is thought to reflect top-down modulation of the sensory cortex by higher-level parietal and frontal cortex (11). Fox proposed two explanations for attention bias (12): first, during initial orienting, attention is attracted to the position of threat-related stimuli, that is, there is excessive alertness to threatening stimuli; second, threat-related stimuli affect how long attention is maintained or how easily it can be disengaged, prolonging the time attention dwells on threatening stimuli and delaying disengagement from them. Negative stimuli capture attention more effectively than positive stimuli. Most attention bias is caused by negative stimuli, which compete for more attentional resources and intensify perceptual processing of the signal, narrowing the focus of attention (13).

In attention experiments, emotional stimuli attract more attention or occupy more attentional resources than non-emotional stimuli. That is to say, specific stimuli tend to arouse individual alertness and attention, and when they are processed together with other stimuli, individuals tend to pay more attention to them. In visual detection tasks, such as finding a discrepant target among a set of distracters, a target with emotional value is found much faster, for example a snake or a spider among flowers (14). Moreover, from an evolutionary perspective, negative emotions signal a lack of safety. Individuals must therefore be vigilant in the way they process information and be ready to take specific actions that increase their chances of survival (people displaying negative emotions can harm us, so we should prepare our defense in advance). In an important study, participants found an angry face in a crowd much faster than a happy face in a crowd (9). That study is particularly important because it used the visual search paradigm, which is considered a good indicator of “pre-attentive” or automatic processing. In a typical visual search task, the subject is asked to detect whether a specified target (such as a blue circle) is present among irrelevant distracters (such as red circles). If the search time does not increase significantly with the number of distracters in the display, the target is considered to “pop out” of the array and the search is considered automatic (15,16).

This study showed that in patients with T2DM without clinically significant cognitive impairment, the response times for recognizing happy and sad expressions were significantly prolonged. This may be related to attentional impairment in T2DM (17,18).

The amygdala is often regarded as the most important emotional brain area. It is well known that the amygdala is essential for fear processing and fear learning, but it is also important for a wider range of functions associated with emotional relevance (19). Neurotoxic damage to amygdala neurons seriously impairs learning to act in response to visual or auditory cues. This brain area is also involved in cognitive processes such as attention, and earlier studies described the absence of visual tracking of targets in monkeys with amygdala damage (20). Emotion and attention influence the activity of the amygdala by affecting the processing of emotional stimuli. The activity of the amygdala is affected only by emotional attention effects at the early stage of stimulus processing, and by both emotional attention effects and top-down attention effects at the late stage (21). The recognition of positive expressions relies on the frontal lobe, left amygdala and left cingulate gyrus, and the recognition of negative expressions depends mainly on the frontal lobe, bilateral amygdala and bilateral cingulate gyrus. There is thus a connection between facial expression recognition and attention function. In diabetic individuals, the amygdala and frontal lobe show atrophy and reduced gray matter (22,23). Compared with normal individuals, frontal lobe-amygdala activation is weakened in diabetic individuals during emotional processing, especially negative emotional processing.

Conclusions

In this study, we used the expression search task to study the facial expression recognition characteristics of diabetic patients for the first time. The facial expression recognition of diabetic patients showed a negative attention bias. The reaction times for happy and sad facial expressions were significantly prolonged in patients with T2DM. The search for happy and sad facial expressions in both the diabetic group and the control group was serial, which requires attention. Further studies with improved experimental design and methods, larger sample sizes, and the combined use of techniques such as functional magnetic resonance imaging and event-related potentials are required to clarify the mechanism of emotional expression recognition processing in T2DM.

Acknowledgments

We thank all the subjects and their families for their participation in the research reported.

Footnote

Conflicts of Interest: The authors have no conflicts of interest to declare.

Ethical Statement: The authors are accountable for all aspects of the work in ensuring that questions related to the accuracy or integrity of any part of the work are appropriately investigated and resolved. This study was approved by the Ethics Committee of Shandong Provincial Hospital Affiliated to Shandong University (No. 2018-215) and we have obtained informed consent from all subjects.

References

1. Reijmer YD, van den Berg E, Ruis C, et al. Cognitive dysfunction in patients with type 2 diabetes. Diabetes Metab Res Rev 2010;26:507-19.

2. Toth C. Diabetes and neurodegeneration in the brain. Handb Clin Neurol 2014;126:489-511.

3. Chatterjee S, Peters SA, Woodward M, et al. Type 2 Diabetes as a Risk Factor for Dementia in Women Compared With Men: A Pooled Analysis of 2.3 Million People Comprising More Than 100,000 Cases of Dementia. Diabetes Care 2016;39:300-7.

4. Biessels GJ, Staekenborg S, Brunner E, et al. Risk of dementia in diabetes mellitus: a systematic review. Lancet Neurol 2006;5:64-74.

5. Henry JD, Ruffman T, McDonald S, et al. Recognition of disgust is selectively preserved in Alzheimer's disease. Neuropsychologia 2008;46:1363-70.

6. Hargrave R, Maddock RJ, Stone V. Impaired recognition of facial expressions of emotion in Alzheimer's disease. J Neuropsychiatry Clin Neurosci 2002;14:64-71.

7. Shimokawa A, Yatomi N, Anamizu S, et al. Recognition of facial expressions and emotional situations in patients with dementia of the Alzheimer and vascular types. Dement Geriatr Cogn Disord 2003;15:163-8.

8. Alberti KG, Zimmet PZ. Definition, diagnosis and classification of diabetes mellitus and its complications. Part 1: diagnosis and classification of diabetes mellitus provisional report of a WHO consultation. Diabet Med 1998;15:539-53.

9. Hansen CH, Hansen RD. Finding the face in the crowd: an anger superiority effect. J Pers Soc Psychol 1988;54:917-24.

10. Purcell DG, Stewart AL, Skov RB. It takes a confounded face to pop out of a crowd. Perception 1996;25:1091-108.

11. Kastner S, Ungerleider LG. Mechanisms of visual attention in the human cortex. Annu Rev Neurosci 2000;23:315-41.

12. Fox E, Russo R, Dutton K. Attentional Bias for Threat: Evidence for Delayed Disengagement from Emotional Faces. Cogn Emot 2002;16:355-79.

13. Rowe G, Hirsh JB, Anderson AK. Positive affect increases the breadth of attentional selection. Proc Natl Acad Sci U S A 2007;104:383-8.

14. Ohman A, Flykt A, Esteves F. Emotion drives attention: detecting the snake in the grass. J Exp Psychol Gen 2001;130:466-78.

15. Treisman A, Souther J. Search asymmetry: a diagnostic for preattentive processing of separable features. J Exp Psychol Gen 1985;114:285-310.

16. Treisman AM, Gelade G. A feature-integration theory of attention. Cogn Psychol 1980;12:97-136.

17. Manschot SM, Brands AM, van der Grond J, et al. Brain magnetic resonance imaging correlates of impaired cognition in patients with type 2 diabetes. Diabetes 2006;55:1106-13.

18. Messier C. Impact of impaired glucose tolerance and type 2 diabetes on cognitive aging. Neurobiol Aging 2005;26 Suppl 1:26-30.

19. Sander D, Grafman J, Zalla T. The human amygdala: an evolved system for relevance detection. Rev Neurosci 2003;14:303-16.

20. Bagshaw MH, Mackworth NH, Pribram KH. The effect of resections of the inferotemporal cortex or the amygdala on visual orienting and habituation. Neuropsychologia 1972;10:153-62.

21. Pourtois G, Spinelli L, Seeck M, et al. Modulation of face processing by emotional expression and gaze direction during intracranial recordings in right fusiform cortex. J Cogn Neurosci 2010;22:2086-107.

22. Chen Z, Li J, Liu M, et al. Volume changes of cortical and subcortical reward circuitry in the brain of patients with type 2 diabetes mellitus. Nan Fang Yi Ke Da Xue Xue Bao 2013;33:1265-72.

23. Nouwen A, Chambers A, Chechlacz M, et al. Microstructural abnormalities in white and gray matter in obese adolescents with and without type 2 diabetes. Neuroimage Clin 2017;16:43-51.